SRCLID: Performance-optimized codes for multiscale modeling


CI Team Members

Florina M. Ciorba

Task objective

The objective of this task is the validation and performance optimization of simulation codes for multiscale modeling, to increase the productivity of scientists and engineers at CAVS. The goals of this task are to:

- understand and improve the run times of the simulation codes

- guide the use of high-performance hardware

- improve performance modeling techniques

- identify the simulation codes that benefit from dynamic load balancing

- improve the performance of the codes on the high-performance computing systems at MSU-HPC2 to accelerate single-scale simulations

- leverage the computing resources to execute more mutually independent simulations simultaneously
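Dynamic load balancing pays off for loops whose iterations have uneven or unpredictable cost. As a minimal sketch of the idea, and not as the scheduling method used in this task, the C functions below compute the chunk sizes produced by guided self-scheduling (GSS), one classic dynamic loop scheduling technique: each idle worker takes the ceiling of R/P of the R remaining iterations. The names `gss_next_chunk` and `gss_schedule` are hypothetical.

```c
#include <stddef.h>

/* Guided self-scheduling (GSS): an idle worker takes ceil(R / P) of the
 * R remaining iterations, so chunks start large (low scheduling overhead)
 * and shrink toward 1 (good load balance near the end of the loop). */
size_t gss_next_chunk(size_t remaining, size_t workers)
{
    if (remaining == 0 || workers == 0)
        return 0;
    return (remaining + workers - 1) / workers; /* integer ceil(R / P) */
}

/* Writes the full GSS chunk sequence for n iterations and p workers into
 * chunks[] and returns the number of chunks written (at most max_chunks). */
size_t gss_schedule(size_t n, size_t p, size_t *chunks, size_t max_chunks)
{
    size_t count = 0;
    size_t remaining = n;

    if (p == 0)
        return 0; /* no workers: nothing can be scheduled */

    while (remaining > 0 && count < max_chunks) {
        size_t c = gss_next_chunk(remaining, p);
        chunks[count++] = c;
        remaining -= c;
    }
    return count;
}
```

For 100 iterations on 4 workers, the schedule starts with chunks of 25, 19, 14, and 11 iterations and ends with chunks of size 1, which lets late-finishing workers even out the load.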

Impact

This work has an immediate impact on the users of the original, non-optimized simulation codes, and the benefits are manifold: faster time to solution, efficient and portable codes, computational performance predictions, and numerical accuracy results.

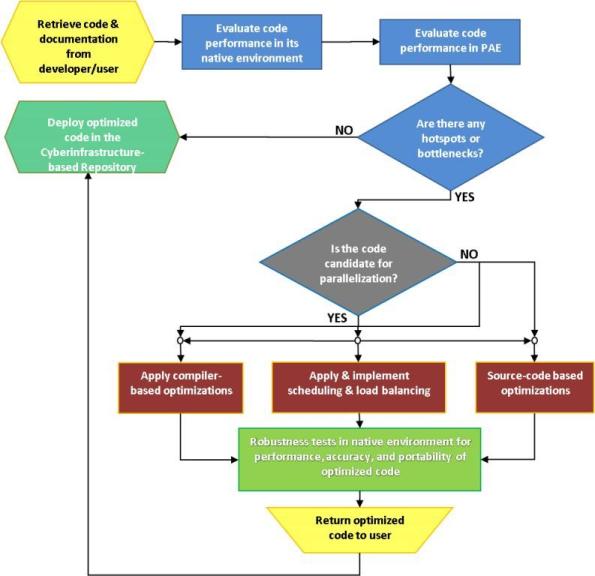

Approach

Performance optimization of programs requires careful program analysis and a very good understanding of the inner workings of the problem being solved or simulated. Traditional approaches dating back more than 35 years, such as printf(), time(), clock(), or `-pg` and gprof, which computational scientists usually employ, are extremely tedious and cannot provide the deep code insights required for optimal performance. State-of-the-art measurement, profiling, visualization, and analysis tools will facilitate and speed up the performance optimization of scientific applications. Moreover, they will enable fast prototyping and improvement of parallelization strategies for the most recent high-performance computing (HPC) systems. Based on such tools, a performance analysis and tuning methodology will be developed for optimizing the performance of parallel scientific programs. The long-term focus will be on enabling fast multiscale simulations on HPC systems using codes from the cyberinfrastructure's repository of computational models.

The goals of this task will be achieved via the following approach:

- Use of performance analysis tools, and development and application of a general methodology for tuning and optimizing the computational performance of the relevant codes

- Development and application of efficient parallelization strategies to improve the performance of codes in stable and unstable computing environments

Performance Analysis Environment (PAE)

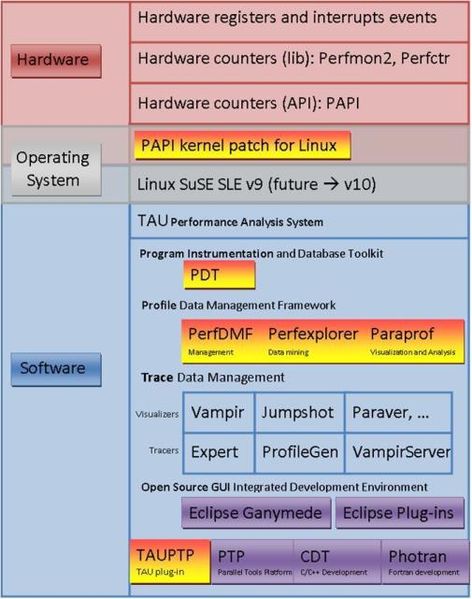

To model the performance of the simulation codes, we established a Performance Analysis Environment (PAE), which employs open-source packages to enable the profiling, analysis, evaluation, and tuning of the computational performance of any serial or parallel program written in Fortran, C, C++, Java, or Python, with support for both shared-memory parallel programming (OpenMP) and distributed-memory message passing (MPI) libraries. We follow a thorough methodology for optimizing the performance of multiscale simulation codes in PAE.

PAE spans the following layers of a computing system:

- Hardware

- Operating System

- Software

PAE’s most important packages:

- PAPI – Performance API for accessing hardware performance counters available on modern microprocessors

- TAU – a profiling and tracing toolkit for performance analysis of serial and parallel programs written in Fortran, C, C++, Java, or Python

- Eclipse – open-source integrated development environment (IDE)

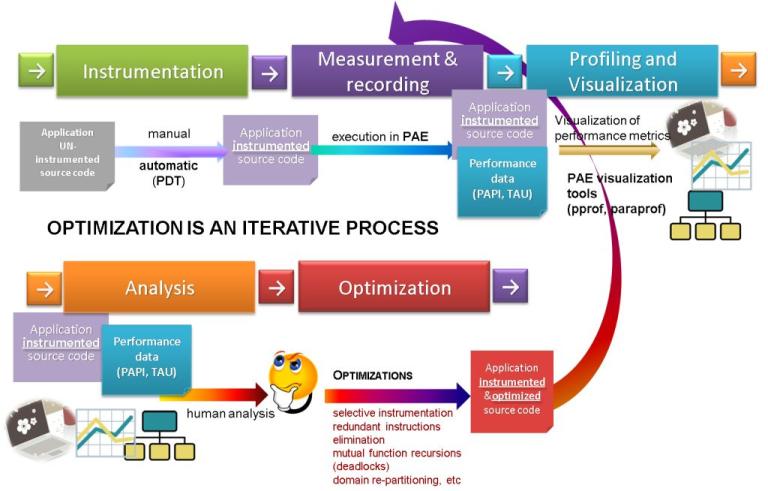

Performance optimization of codes using PAE

The five steps of the performance analysis and tuning process are:

- Instrumentation

- Measurement and recording

- Profiling and visualization

- Analysis

- Optimization

Performance optimization is an iterative process; therefore, the above steps are repeated until the desired performance is achieved.
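The first three steps can be illustrated by hand with the kind of clock()-based instrumentation that the Approach section describes as tedious; tools such as TAU automate this bookkeeping across an entire program. The following is a minimal sketch under that assumption: `region_t`, `region_enter`, `region_exit`, `region_report`, and the stand-in `work` function are hypothetical names, not part of TAU or PAPI.

```c
#include <stdio.h>
#include <time.h>

/* Hypothetical hand-rolled instrumentation record, one per code region. */
typedef struct {
    const char *name; /* region label, e.g. a subroutine name */
    long calls;       /* number of times the region was entered */
    double seconds;   /* accumulated CPU time spent in the region */
    clock_t start;    /* clock() value at the most recent entry */
} region_t;

/* Step 1 (instrumentation): call these around the code of interest. */
void region_enter(region_t *r)
{
    r->start = clock();
}

void region_exit(region_t *r)
{
    /* Step 2 (measurement and recording): accumulate elapsed CPU time. */
    r->seconds += (double)(clock() - r->start) / CLOCKS_PER_SEC;
    r->calls++;
}

/* Step 3 (profiling): print a flat profile, one line per region. */
void region_report(const region_t *regions, int n, FILE *out)
{
    fprintf(out, "%-12s %8s %12s\n", "region", "calls", "cpu_seconds");
    for (int i = 0; i < n; i++)
        fprintf(out, "%-12s %8ld %12.6f\n", regions[i].name,
                regions[i].calls, regions[i].seconds);
}

/* Stand-in workload to instrument (partial harmonic sum). */
double work(long n)
{
    double s = 0.0;
    for (long i = 1; i <= n; i++)
        s += 1.0 / (double)i;
    return s;
}
```

Wrapping each call of `work` in `region_enter`/`region_exit` and then calling `region_report` prints the call count and accumulated CPU time per region; the analysis and optimization steps then decide which region to tune next, and the cycle repeats.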

Deliverables

The outcome of this team's research is an effective and efficient solution for performing fast multiscale simulations, comprising:

- Computationally optimized simulation codes (Modules 1-5)

- Stable and portable simulation codes (Modules 1-5)

- Accurate computational performance predictions and numerical accuracy guarantees for simulation codes (Modules 1-5)
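Computational performance predictions can start from simple analytical models. As one example, not necessarily the model used in this project, Amdahl's law predicts the speedup on p processors from the fraction f of serial run time that is parallelized. The sketch below implements it together with parallel efficiency; the function names and the value f = 0.9 used in the note are illustrative assumptions, not measurements of the codes.

```c
/* Amdahl's law: if a fraction f of the serial run time is parallelized
 * perfectly over p processors, the predicted speedup is
 *     S(p) = 1 / ((1 - f) + f / p).
 * Returns 0.0 for invalid input. */
double amdahl_speedup(double parallel_fraction, int processors)
{
    if (processors < 1 || parallel_fraction < 0.0 || parallel_fraction > 1.0)
        return 0.0;
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / processors);
}

/* Parallel efficiency E(p) = S(p) / p: the fraction of ideal speedup. */
double parallel_efficiency(double speedup, int processors)
{
    return processors > 0 ? speedup / processors : 0.0;
}
```

With f = 0.9, the model predicts a speedup of about 3.1 on 4 CPUs and only about 5.7 on 12 CPUs, which shows why shrinking the serial fraction matters as much as adding processors.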

Performance Analysis Environment (established for the first time at MSU-HPC2)

- Uses PAPI, TAU, and Eclipse for profiling and tracing the performance of serial and parallel programs written in Fortran, C, C++, Java, or Python

- Platform: CentOS Linux on a Core 2 Duo laptop

- Testbeds: microstructure mapping code, discrete dislocation dynamics code

Optimized Simulation Codes

- Microstructure grain mapping (MCgui: serial): Mesoscale simulation code used in SRCLID Task 1

- Micromegas: Microscale simulation code used in SRCLID Tasks 1 and 4

- Compiler optimization (mM 1.0)

- FORCE subroutine: computes the forces between dislocation segments in Micromegas for 3D discrete dislocation dynamics (mMpar 1.0: OpenMP, on 4 CPUs)
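Parallelizing a force computation such as FORCE typically follows a common OpenMP pattern for pairwise interactions: distribute the outer loop over threads so that each iteration writes only its own result. The sketch below illustrates that pattern only and is not the Micromegas code; the 1D `pair_force` law and the function names are hypothetical stand-ins for the dislocation-segment force computation, and `schedule(dynamic)` is an assumed choice.

```c
#include <stddef.h>

/* Hypothetical pairwise interaction between two points on a line; a real
 * dislocation-segment force law is far more involved. */
static double pair_force(double xi, double xj)
{
    const double eps = 1.0e-6; /* softening term avoids division by zero */
    double d = xj - xi;
    return d / (d * d + eps);
}

/* Accumulates the net force on every point from all other points.
 * Outer-loop iterations are independent because iteration i writes only
 * force[i], so OpenMP can distribute them across threads without locks.
 * schedule(dynamic) helps when per-point work varies, as it can in real
 * codes. Compiled without OpenMP support, the pragma is ignored and the
 * loop runs serially with the same result. */
void compute_forces(const double *x, double *force, size_t n)
{
    #pragma omp parallel for schedule(dynamic)
    for (long i = 0; i < (long)n; i++) {
        double f = 0.0;
        for (size_t j = 0; j < n; j++)
            if ((size_t)i != j)
                f += pair_force(x[i], x[j]);
        force[i] = f;
    }
}
```

Because `pair_force(a, b)` is exactly the negative of `pair_force(b, a)`, the forces on all points sum to zero up to rounding, which gives a cheap correctness check when comparing serial and parallel runs.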

Code currently being optimized

- Micromegas: Microscale simulation code used in SRCLID Tasks 1 and 4

- UPDATE subroutine: handles the dislocation interactions in Micromegas for 3D discrete dislocation dynamics (mMpar 2.0: OpenMP, on 4-12 CPUs) [work in progress]

Codes to be optimized

- Molecular Dynamics module (nanoscale) to be used in Tasks 1, 4, and 5

- Quantum Scale module to be used in Task 6

Accomplishments

Publications

- F. M. Ciorba, S. Groh, and M. F. Horstemeyer, "Parallelizing discrete dislocation dynamics simulations on multi-core systems", International Conference on Computational Science (ICCS 2010), Workshop on Tools for Program Development and Analysis in Computational Science

- S. Srivastava, I. Banicescu, and F. M. Ciorba, "Investigating the robustness of adaptive dynamic loop scheduling on heterogeneous computing systems", IEEE International Parallel and Distributed Processing Symposium (IPDPS-PDSEC 2010), Atlanta, GA

- I. Banicescu, F. M. Ciorba, and R. L. Cariño, "Towards the robustness of dynamic loop scheduling on large-scale heterogeneous distributed systems", 8th International Symposium on Parallel and Distributed Computing (ISPDC 2009), July 1–3, 2009, Lisbon, Portugal

- F. M. Ciorba, S. Groh, and M. F. Horstemeyer, "Early experiences and results on parallelizing discrete dislocation dynamics simulations on multi-core architectures", MSU.CAVS.CMD.2010-R0002

- R. L. Cariño, F. M. Ciorba, Q. Ma, and E. B. Marin, "Improving the performance and usability of a microstructure mapping code", MSU.CAVS.CMD.2009-R0023

- F. M. Ciorba, R. L. Cariño, and I. Banicescu, "Towards the robustness of high-performance execution of multiscale numerical simulation codes hosted by the Cyberinfrastructure of CAVS @ MSU", MSU.CAVS.CMD.2009-R0008

Proposals

- NSF: T. Haupt, F. M. Ciorba, and S. F. Ghafoor, "Modeling and Simulations of Adaptive Parallel Systems", proposal submitted in response to the NSF CISE Solicitation No. NSF 09-556 [in review]

- DOE: R. L. Cariño, T. Haupt, and F. M. Ciorba, "Cyberinfrastructure for Materials Engineering at Quantum, Nano and Macro Length Scales", pre-application proposal submitted in response to the DOE EPSCoR Solicitation No. DE-PS02-09ER09-11 [in review]